Zero To Hero In Ollama: Create Local LLM Applications

Published 9/2024

MP4 | Video: h264, 1920x1080 | Audio: AAC, 44.1 kHz

Language: English | Size: 1.29 GB | Duration: 3h 5m

Run customized LLM models on your system privately | Use a ChatGPT-like interface | Build local applications using Python

What you'll learn

Install and configure Ollama on your local system to run large language models privately.

Customize LLM models to suit specific needs using Ollama's options and command-line tools.

Execute all terminal commands necessary to control, monitor, and troubleshoot Ollama models.

Set up and manage a ChatGPT-like interface using Open WebUI, allowing you to interact with models locally.

Deploy Docker and Open WebUI for running, customizing, and sharing LLM models in a private environment.

Utilize different model types, including text, vision, and code-generating models, for various applications.

Create custom LLM models from a gguf file and integrate them into your applications.

Build Python applications that interface with Ollama models using its native library and OpenAI API compatibility.

Develop a RAG (Retrieval-Augmented Generation) application by integrating Ollama models with LangChain.

Implement tools and agents to enhance model interactions in both Open WebUI and LangChain environments for advanced workflows.
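
As a rough illustration of the "Python application that interfaces with Ollama" item above, here is a minimal sketch that talks to a local Ollama server over its REST chat endpoint using only the standard library. It assumes Ollama is running at its default address (`http://localhost:11434`) and that a model has already been pulled; the model name `llama3.1` and the helper names `build_chat_request` and `chat` are illustrative choices, not taken from the course material.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed, not from the course).
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_chat_request(model, prompt):
    """Build (without sending) a POST request for Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for a single JSON reply instead of streamed chunks
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model, prompt):
    """Send the request to the local server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(model, prompt)) as response:
        body = json.load(response)
    return body["message"]["content"]
```

With the server running, a call such as `chat("llama3.1", "Why run an LLM locally?")` should return the model's answer as a string. The course itself covers the `ollama` Python library and the OpenAI-compatible API; plain HTTP is used here only to keep the sketch dependency-free.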

Requirements

Basic Python knowledge and a computer capable of running Docker and Ollama are recommended, but no prior AI experience is required.

Description

Are you looking to build and run customized large language models (LLMs) right on your own system, without depending on cloud solutions? Do you want to maintain privacy while leveraging powerful models similar to ChatGPT? If you're a developer, data scientist, or an AI enthusiast wanting to create local LLM applications, this course is for you!

This hands-on course will take you from beginner to expert in using Ollama, a platform designed for running local LLM models. You'll learn how to set up and customize models, create a ChatGPT-like interface, and build private applications using Python, all from the comfort of your own system.

In this course, you will:

Install and customize Ollama for local LLM model execution

Master all command-line tools to effectively control Ollama

Run a ChatGPT-like interface on your system using Open WebUI

Integrate various models (text, vision, code generation) and even create your own custom models

Build Python applications using Ollama and its library, with OpenAI API compatibility

Leverage LangChain to enhance your LLM capabilities, including Retrieval-Augmented Generation (RAG)

Deploy tools and agents to interact with Ollama models in both terminal and LangChain environments

Why is this course important? In a world where privacy is becoming a greater concern, running LLMs locally ensures your data stays on your machine. This not only improves data security but also allows you to customize models for specialized tasks without external dependencies.

You'll complete activities like building custom models, setting up Docker for web interfaces, and developing RAG applications that retrieve and respond to user queries based on your data. Each section is packed with real-world applications to give you the experience and confidence to build your own local LLM solutions.

Why this course? I specialize in making advanced AI topics practical and accessible, with hands-on projects that ensure you're not just learning but actually building real solutions. Whether you're new to LLMs or looking to deepen your skills, this course will equip you with everything you need.

Ready to build your own LLM-powered applications privately? Enroll now and take full control of your AI journey with Ollama!
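
To give a feel for the retrieval step behind the RAG applications mentioned in the description, here is a toy sketch in plain Python. It is not the course's LangChain pipeline: word counts stand in for a real embedding model, and the function names (`embed`, `cosine`, `retrieve`, `build_prompt`) are hypothetical. The idea it shows is the general one: score each document chunk against the question, keep the best matches, and place them in the prompt sent to the model.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k=1):
    """Return the k chunks most similar to the question."""
    query = embed(question)
    return sorted(chunks, key=lambda chunk: cosine(query, embed(chunk)), reverse=True)[:k]


def build_prompt(question, chunks, k=1):
    """Combine the retrieved chunks and the question into one prompt for the model."""
    context = "\n".join(retrieve(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

In a real application, the string returned by `build_prompt` would be sent to a locally running model, and the word-count vectors would be replaced by embeddings from an embedding model.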

Overview

Section 1: Introduction

Lecture 1 Introduction

Lecture 2 Installing and Setting up Ollama

Lecture 3 Model customizations and other options

Lecture 4 All Ollama Command Prompt/Terminal commands

Section 2: Open WebUI - ChatGPT-like interface for Ollama models

Lecture 5 Introduction to Open WebUI

Lecture 6 Setting up Docker and Open WebUI

Lecture 7 Open WebUI features and functionalities

Lecture 8 Getting response based on documents and websites

Lecture 9 Open WebUI user access control

Section 3: Types of Models and their capabilities

Lecture 10 Types of Ollama models

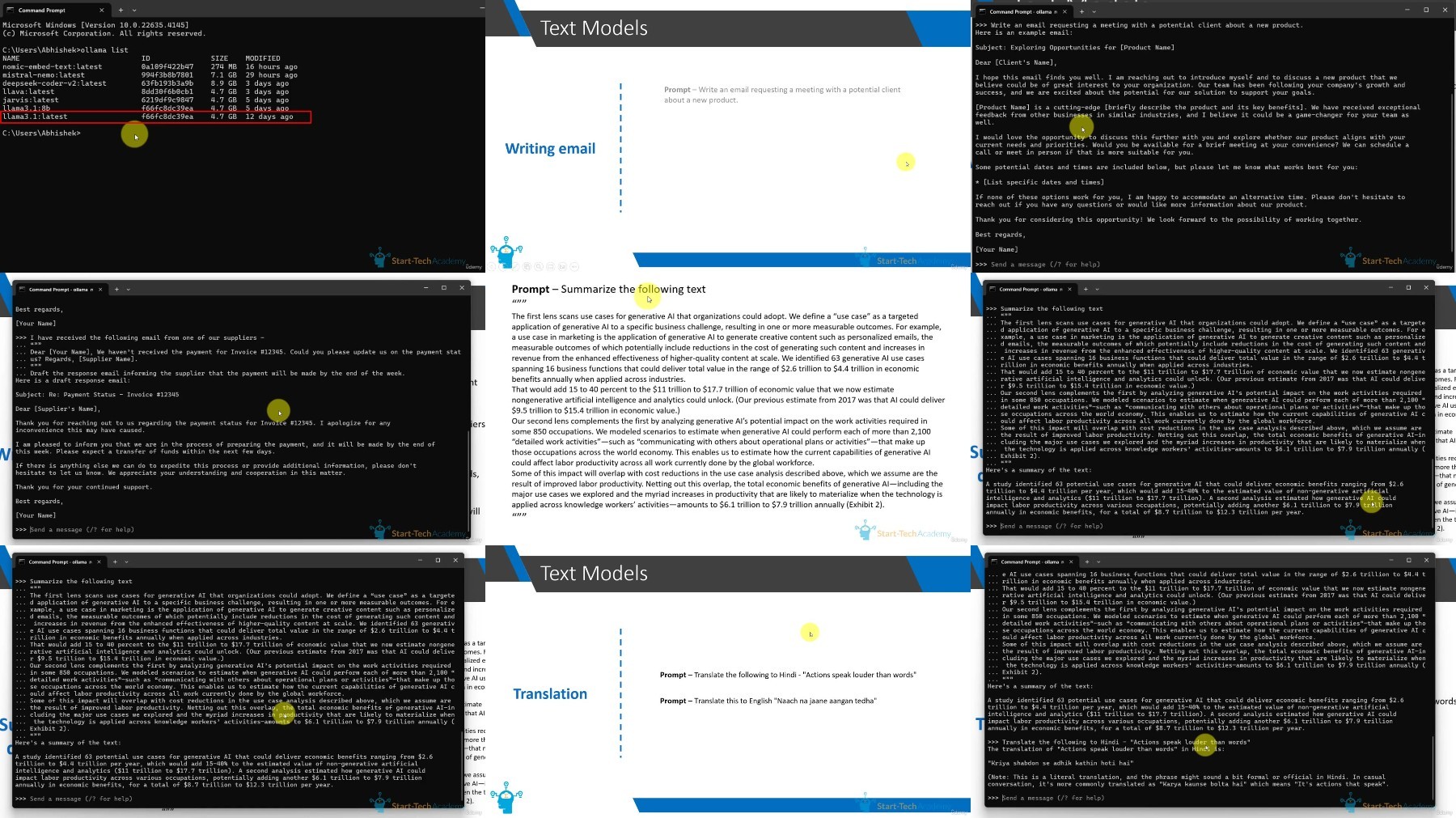

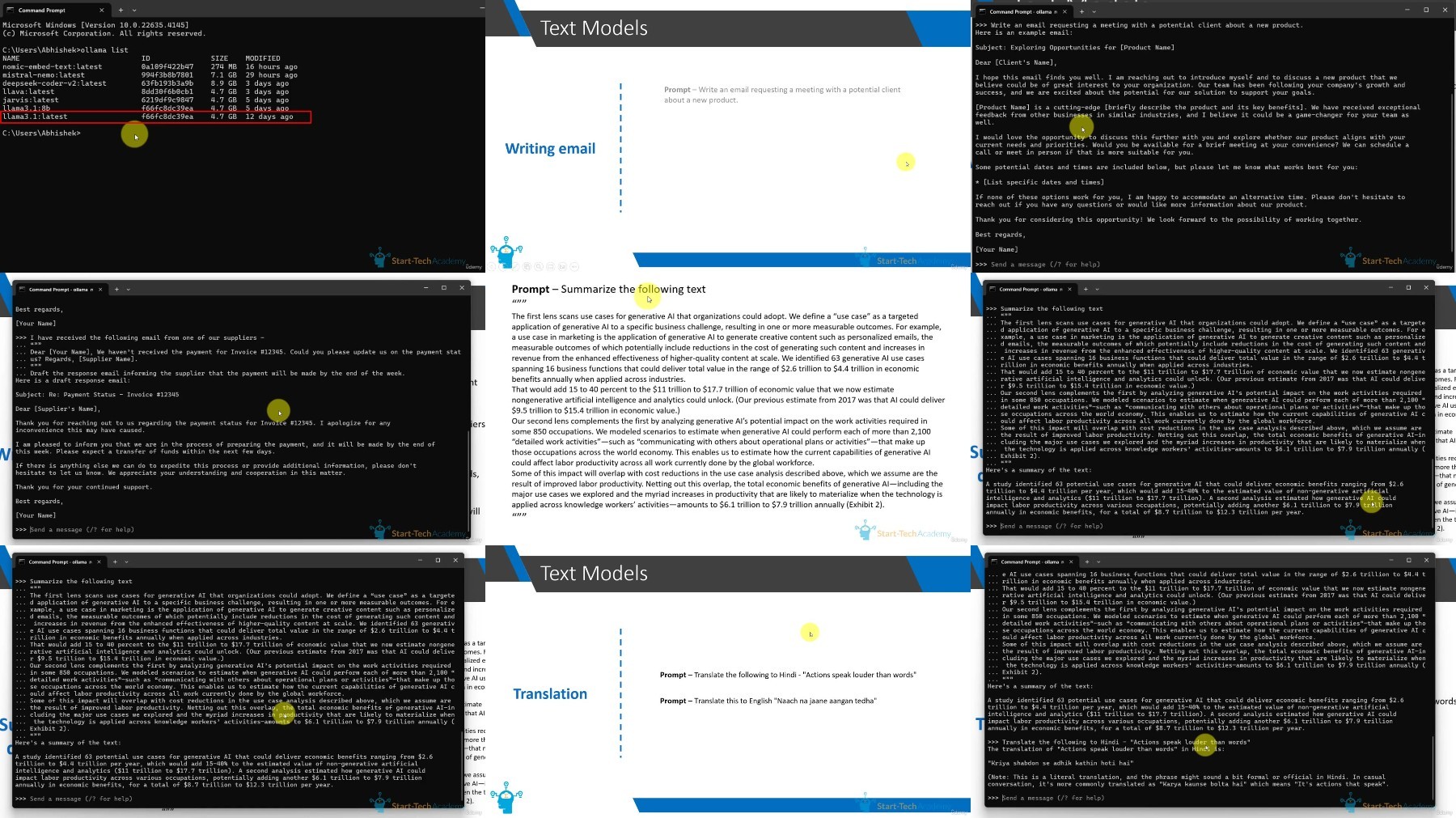

Lecture 11 Text models

Lecture 12 Vision models

Lecture 13 Code generating models

Lecture 14 Create custom model from gguf file

Section 4: Using Ollama with Python

Lecture 15 Installing and Setting up Python environment

Lecture 16 Using Ollama in Python using Ollama library

Lecture 17 Calling Model using API and OpenAI compatibility

Section 5: Using Ollama with LangChain in Python

Lecture 18 What is LangChain and why are we using it?

Lecture 19 Basic modules of LangChain

Section 6: Creating RAG application using Ollama and LangChain

Lecture 20 Understanding the concept of RAG (Retrieval Augmented Generation)

Lecture 21 Loading, Chunking and Embedding documents using LangChain and Ollama

Lecture 22 Answering user question with retrieved information

Section 7: Using Tools and Agents with Ollama models

Lecture 23 Understanding Tools and Agents

Lecture 24 Tools and Agents using LangChain and Llama3.1

Section 8: Conclusion

Lecture 25 About your certificate

Lecture 26 Bonus lecture

Who this course is for

AI enthusiasts who want to build and run customized LLM models privately on their local systems.

Python developers seeking to integrate large language models into local applications for enhanced functionality.

Data scientists who aim to create secure, private LLM-powered tools without relying on cloud-based solutions.

Machine learning engineers looking to explore and customize open-source models using Ollama and LangChain.

Tech professionals who want to develop RAG (Retrieval-Augmented Generation) applications using local data.

Privacy-conscious developers interested in running AI models with full control over data and environment.

https://ddownload.com/w1dc0h6telcb/.Zero.to.Hero.in.Ollama.Create.Local.LLM.Applications.2024-10.rar
